A thin line between fake and reality

In the midst of the war in Ukraine, a video of Ukrainian President Volodymyr Zelenskyy circulated on the website of a news channel. He wears a uniform and looks gravely into the camera. In his digital address to Ukraine's citizens, he calls on them to lay down their weapons and return to their families. Just moments later, another video message from the president appears on his personal social media account. There, Zelenskyy insists that Ukraine should and will continue to defend itself. If anyone should lay down arms, he says, it should be the Russian military.

In these two contradictory speeches, the Ukrainian president is clearly visible. His mouth movements, facial expressions and gestures match what he says. But only the video message on Zelenskyy's own social media channel is genuine. The video published on the Ukrainian news channel is a deepfake.

What is a deepfake?

A deepfake is an image or video created using artificial intelligence (AI) that looks authentic but isn't. "Deepfakes are a type of mask. Through artificial intelligence, the face of a real person is replaced by that of another person," explains Christian Muth, founder of the communications agency muthmedia. The agency uses AI to implement communication concepts for clients. In doing so, it generates exceptional audio, image, and video worlds.

"The better the source material, the more authentic the deepfake looks," says Normann Seidel, CTO at muthmedia. These genuine-looking deepfakes become dangerous when they are used to manipulate Internet users or to spread fake news. The Zelenskyy deepfake video is not an isolated case. Manipulated video recordings of politicians, celebrities or private individuals appear on the web more and more often, and as the technology improves, it is becoming increasingly difficult to tell whether footage is real or fake.

The makers of deepfakes pursue different goals. "But only in the rarest cases do the creators have something good in mind. Often, the motivation is to harm, humiliate or manipulate others," says Christian Scherg, communications expert and founder of the reputation management agency REVOLVERMÄNNER. The AI-generated videos are used not only to twist politicians' words or to manipulate elections. A large number of these digital manipulations are also found in pornography, where celebrities or private individuals are victimized. Deepfakes also affect people beyond the direct victims, as Scherg explains: "At the end of the day, the material harms at least one person, which is the person who is depicted in it. But it also harms others because they fell for it."

For Scherg, another issue needs to be highlighted when talking about deepfakes. The question is always where to draw the line, he says. "If I take things out of context and then edit them into a different context, it can already be a deepfake," Scherg explains. Suppose a politician from a left-wing party gives an interview in which she says: "Here in the village there are some people who say: 'I am conservative and therefore vote for a right-wing party'." If someone now cuts out only the second part of this statement and publishes it as a quotation, readers would think that the politician was speaking about herself. Many left-wing voters would reproach her and the party. The statement has been torn out of context and was never made in this form, and that is the problem Scherg is pointing to.

The issue is so complicated and dicey because the possibilities for use are endless. "And the more often a deepfake is viewed and downloaded, the more dangerous it becomes, because these videos are uploaded again and distributed to an even larger audience," Scherg emphasizes.

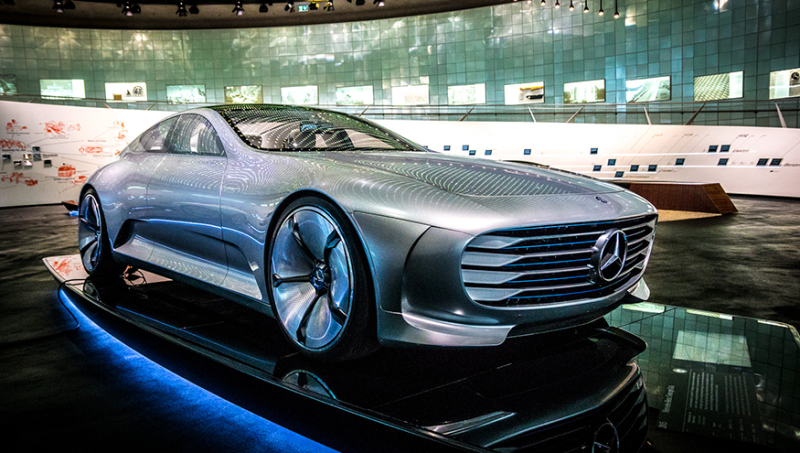

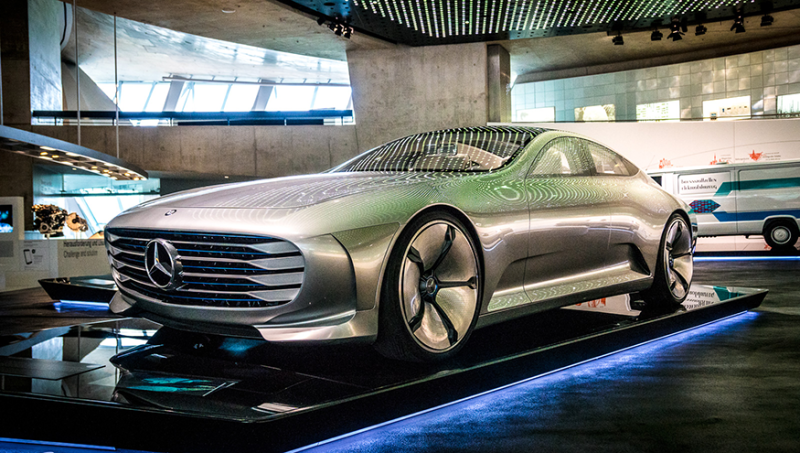

But for many people, AI-generated footage holds great fascination. This explains the great success of the Avatar film series, in which actors become new creatures through deepfake technologies. Technological progress opens up new worlds and breaks down the limits of human imagination. "With deepfakes, we create art," Muth says. For him, deepfake technology is a tool comparable to Photoshop or video editing programs. "Deepfakes offer an alternative approach for us. We can use it to create something new. Something that doesn't exist yet. Often in the media industry, people are stuck when it comes to using new tools," explains Seidel.

muthmedia uses AI across various channels: websites, blogs, social media, flyers, posters, and brochures. Depending on the context, the agency does not necessarily see the need to label deepfakes as such in order to make it clear to the audience that they are not real recordings. "Actually, with our concepts, it's always clear from the context that it's a deepfake. Mostly they come with a wink," says the agency founder. What makes the AI-generated campaigns so valuable for muthmedia is that they stand out and are thus intended to give companies a competitive advantage.

The muthmedia agency creates communication solutions for companies in the field of moving images. A large part of its work is creating concepts with AI-generated image and video elements. The Frankfurt-based agency aims to create new possibilities for visual communication with technologies such as DALL-E 2 and Stable Diffusion. The team also develops its own technologies in order to be a pioneer in AI-generated concepts.

Muth and Seidel are aware that deepfakes should also be viewed critically. For them, it is undisputed that there are people who use deepfakes to manipulate others or to spread fake news. "I like to draw the comparison to a kitchen knife here. In principle, a kitchen knife is not dangerous. Unless it gets into the hands of the wrong person. And that's exactly what the problem is with deepfakes," Muth says. Deepfakes aren't dangerous as long as they aren't misused. It's not the tool that's evil, but the intention and the purpose of the people using it.

In the digital world, we will encounter deepfakes more and more often, and they will become increasingly difficult to identify. For Muth and Seidel, a law that regulates or even bans deepfakes does not seem a sensible way to minimize the risk. "After all, kitchen knives are not banned either," Muth argues. Seidel, too, believes that media education and awareness are a better approach to counteracting deepfake misuse. It should also be remembered that technologies that can detect deepfakes are constantly improving as well. In the future, it will probably be possible to install plug-ins that directly expose a deepfake as such.

How not to be fooled by a deepfake

Scherg is also in favor of raising awareness, especially with regard to the media literacy of young people. "It is almost impossible to detect a deepfake with the naked eye. Especially with the wealth of information and the intensity with which such videos would have to be examined," he points out.

Rather, he says, it's about people asking themselves again, "Whom and what source do I trust?" Even though Scherg also sees deepfakes as an opportunity to create good things with artistic ambition, for him the negative aspects outweigh the positive overall. "The moment I use a deepfake without labeling it as such, it's an unpredictable danger." For the communications expert, it is beyond question that we will have to get used to deepfakes in the future. However, Scherg is positive about one thing: "With every piece of dubious information that is exposed as such, the value of serious information increases. That's why I'm almost grateful for every fake."

Christian Scherg is a leading German communications expert and advises companies, politicians and private individuals with his company REVOLVERMÄNNER. As a reputation manager, his work focuses, among other things, on the reputation of people and companies that have fallen victim to deepfakes.